synthcity.plugins.core.dataloader module

- class DataLoader(data_type: str, data: Any, static_features: List[str] = [], temporal_features: List[str] = [], sensitive_features: List[str] = [], important_features: List[str] = [], outcome_features: List[str] = [], train_size: float = 0.8, random_state: int = 0, **kwargs: Any)

Bases:

object

Base class for all data loaders.

- Each derived class must implement the following methods:

- unpack(): unpacks the columns and returns features and labels (X, y).
- decorate(): creates a new instance of DataLoader by decorating the input data with the same DataLoader properties (e.g. sensitive features, target column, etc.).
- dataframe(): returns the pandas dataframe that contains all features and samples.
- numpy(): returns the numpy array that contains all features and samples.
- info(): returns a dictionary of DataLoader information.
- __len__(): returns the number of samples in the DataLoader.
- satisfies(): tests if the current DataLoader satisfies the constraint provided.
- match(): returns a new DataLoader where the provided constraints are met.
- from_info(): a static method that creates a DataLoader from the data and the information dictionary.
- sample(): returns a new DataLoader that contains a random subset of N samples.
- drop(): returns a new DataLoader with a list of columns dropped.
- __getitem__(): gets features by name.
- __setitem__(): sets features by name.
- train(): returns a DataLoader containing the training set.
- test(): returns a DataLoader containing the testing set.
- fillna(): returns a DataLoader with NaN filled by the provided number(s).

If any method implementation is missing, the class constructor will fail.
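The failure mode described above can be illustrated with Python's standard `abc` module. This is a minimal sketch, assuming the base class relies on the usual abstract-method mechanism; `MiniLoader`, `CompleteLoader` and `IncompleteLoader` are hypothetical, heavily reduced stand-ins and not part of synthcity.

```python
from abc import ABC, abstractmethod
from typing import Any, List


class MiniLoader(ABC):
    """Hypothetical, reduced stand-in for an abstract loader base class."""

    def __init__(self, data: List[Any]) -> None:
        self.data = data

    @abstractmethod
    def __len__(self) -> int:
        ...

    @abstractmethod
    def sample(self, count: int) -> "MiniLoader":
        ...


class CompleteLoader(MiniLoader):
    """Implements every abstract method, so it can be instantiated."""

    def __len__(self) -> int:
        return len(self.data)

    def sample(self, count: int) -> "CompleteLoader":
        # Takes the first `count` items; deterministic, for illustration only.
        return CompleteLoader(self.data[:count])


class IncompleteLoader(MiniLoader):
    """sample() is deliberately missing."""

    def __len__(self) -> int:
        return len(self.data)


ok = CompleteLoader([1, 2, 3])

try:
    IncompleteLoader([1, 2, 3])
    construction_failed = False
except TypeError:
    # Python refuses to instantiate a class with unimplemented abstract methods.
    construction_failed = True
```

With this mechanism the error surfaces at construction time, before any data is processed, rather than later when the missing method is first called.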

- Constructor Args:

- data_type: str

The type of DataLoader, currently supports “generic”, “time_series” and “survival”.

- data: Any

The object that contains the data

- static_features: List[str]

List of feature names that are static features (as opposed to temporal features).

- temporal_features: List[str]

List of feature names that are temporal features, i.e. observed over time.

- sensitive_features: List[str]

Name of sensitive features.

- important_features: List[str]

Default: None. Only relevant for SurvivalGAN method.

- outcome_features: List[str]

The feature name that provides labels for downstream tasks.

- abstract property columns: list

- compress() Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- abstract compression_protected_features() list

- abstract dataframe() pandas.core.frame.DataFrame

- decode(encoders: Dict[str, Any]) synthcity.plugins.core.dataloader.DataLoader

- decompress(context: Dict) synthcity.plugins.core.dataloader.DataLoader

- abstract decorate(data: Any) synthcity.plugins.core.dataloader.DataLoader

- domain() Optional[str]

- abstract drop(columns: list = []) synthcity.plugins.core.dataloader.DataLoader

- encode(encoders: Optional[Dict[str, Any]] = None) Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- abstract fillna(value: Any) synthcity.plugins.core.dataloader.DataLoader

- abstract static from_info(data: pandas.core.frame.DataFrame, info: dict) synthcity.plugins.core.dataloader.DataLoader

- abstract get_fairness_column() Union[str, Any]

- hash() str

- abstract info() dict

- abstract is_tabular() bool

- abstract match(constraints: synthcity.plugins.core.constraints.Constraints) synthcity.plugins.core.dataloader.DataLoader

- abstract numpy() numpy.ndarray

- raw() Any

- abstract sample(count: int, random_state: int = 0) synthcity.plugins.core.dataloader.DataLoader

- abstract satisfies(constraints: synthcity.plugins.core.constraints.Constraints) bool

- abstract property shape: tuple

- abstract test() synthcity.plugins.core.dataloader.DataLoader

- abstract train() synthcity.plugins.core.dataloader.DataLoader

- type() str

- abstract unpack(as_numpy: bool = False, pad: bool = False) Any

- property values: numpy.ndarray

- class GenericDataLoader(data: Union[pandas.core.frame.DataFrame, list, numpy.ndarray], sensitive_features: List[str] = [], important_features: List[str] = [], target_column: Optional[str] = None, fairness_column: Optional[str] = None, domain_column: Optional[str] = None, random_state: int = 0, train_size: float = 0.8, **kwargs: Any)

Bases:

synthcity.plugins.core.dataloader.DataLoader

Data loader for generic tabular data.

- Constructor Args:

- data: Union[pd.DataFrame, list, np.ndarray]

The dataset: a pandas DataFrame, a list, or a NumPy array.

- sensitive_features: List[str]

Name of sensitive features.

- important_features: List[str]

Default: None. Only relevant for SurvivalGAN method.

- target_column: Optional[str]

The feature name that provides labels for downstream tasks.

- fairness_column: Optional[str]

Optional fairness column label, used for fairness benchmarking.

- domain_column: Optional[str]

Optional domain label, used for domain adaptation algorithms.

- random_state: int

Defaults to zero.

Example

>>> from sklearn.datasets import load_diabetes
>>> from synthcity.plugins.core.dataloader import GenericDataLoader
>>> X, y = load_diabetes(return_X_y=True, as_frame=True)
>>> X["target"] = y
>>> # Important note: preprocessing data with OneHotEncoder or StandardScaler is not needed or recommended.
>>> # Synthcity handles feature encoding and standardization internally.
>>> loader = GenericDataLoader(X, target_column="target", sensitive_features=["sex"])

- property columns: list

- compress() Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- compression_protected_features() list

- dataframe() pandas.core.frame.DataFrame

- decode(encoders: Dict[str, Any]) synthcity.plugins.core.dataloader.DataLoader

- decompress(context: Dict) synthcity.plugins.core.dataloader.DataLoader

- decorate(data: Any) synthcity.plugins.core.dataloader.DataLoader

- domain() Optional[str]

- drop(columns: list = []) synthcity.plugins.core.dataloader.DataLoader

- encode(encoders: Optional[Dict[str, Any]] = None) Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- fillna(value: Any) synthcity.plugins.core.dataloader.DataLoader

- static from_info(data: pandas.core.frame.DataFrame, info: dict) synthcity.plugins.core.dataloader.GenericDataLoader

- get_fairness_column() Union[str, Any]

- hash() str

- info() dict

- is_tabular() bool

- match(constraints: synthcity.plugins.core.constraints.Constraints) synthcity.plugins.core.dataloader.DataLoader

- numpy() numpy.ndarray

- raw() Any

- sample(count: int, random_state: int = 0) synthcity.plugins.core.dataloader.DataLoader

- satisfies(constraints: synthcity.plugins.core.constraints.Constraints) bool

- property shape: tuple

- type() str

- unpack(as_numpy: bool = False, pad: bool = False) Any

- property values: numpy.ndarray

- class ImageDataLoader(data: Union[torch.utils.data.dataset.Dataset, Tuple[torch.Tensor, torch.Tensor]], height: int = 32, width: Optional[int] = None, random_state: int = 0, train_size: float = 0.8, **kwargs: Any)

Bases:

synthcity.plugins.core.dataloader.DataLoader

Data loader for generic image data.

- Constructor Args:

- data: Union[torch.utils.data.Dataset, Tuple[torch.Tensor, torch.Tensor]]

The image dataset or a tuple of (tensor images, tensor labels)

- random_state: int

Defaults to zero.

- height: int = 32

Height to use internally.

- width: Optional[int]

Optional width to use internally. If None, the value of height is used.

- train_size: float = 0.8

Train dataset ratio.

Example

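>>> from torchvision import datasets
>>> from synthcity.plugins.core.dataloader import ImageDataLoader
>>> dataset = datasets.MNIST(".", download=True)
>>>
>>> loader = ImageDataLoader(
...     data=dataset,
...     train_size=0.8,
...     height=32,
...     width=32,
... )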

- property columns: list

- compress() Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- compression_protected_features() list

- dataframe() pandas.core.frame.DataFrame

- decode(encoders: Dict[str, Any]) synthcity.plugins.core.dataloader.DataLoader

- decompress(context: Dict) synthcity.plugins.core.dataloader.DataLoader

- decorate(data: Any) synthcity.plugins.core.dataloader.DataLoader

- domain() Optional[str]

- drop(columns: list = []) synthcity.plugins.core.dataloader.DataLoader

- encode(encoders: Optional[Dict[str, Any]] = None) Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- fillna(value: Any) synthcity.plugins.core.dataloader.DataLoader

- static from_info(data: torch.utils.data.dataset.Dataset, info: dict) synthcity.plugins.core.dataloader.ImageDataLoader

- get_fairness_column() None

Not implemented for ImageDataLoader

- hash() str

- info() dict

- is_tabular() bool

- match(constraints: synthcity.plugins.core.constraints.Constraints) synthcity.plugins.core.dataloader.DataLoader

- numpy() numpy.ndarray

- raw() Any

- sample(count: int, random_state: int = 0) synthcity.plugins.core.dataloader.DataLoader

- satisfies(constraints: synthcity.plugins.core.constraints.Constraints) bool

- property shape: tuple

- type() str

- unpack(as_numpy: bool = False, pad: bool = False) Any

- property values: numpy.ndarray

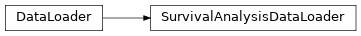

- class SurvivalAnalysisDataLoader(data: pandas.core.frame.DataFrame, time_to_event_column: str, target_column: str, time_horizons: list = [], sensitive_features: List[str] = [], important_features: List[str] = [], fairness_column: Optional[str] = None, random_state: int = 0, train_size: float = 0.8, **kwargs: Any)

Bases:

synthcity.plugins.core.dataloader.DataLoader

Data Loader for Survival Analysis Data

- Constructor Args:

- data: pd.DataFrame

The dataset, as a pandas DataFrame.

- time_to_event_column: str

Survival Analysis specific time-to-event feature

- target_column: str

The outcome (event or censoring). This feature provides the labels for downstream tasks.

- sensitive_features: List[str]

Name of sensitive features.

- important_features: List[str]

Default: None. Only relevant for SurvivalGAN method.

- fairness_column: Optional[str]

Optional fairness column label, used for fairness benchmarking.

- domain_column: Optional[str]

Optional domain label, used for domain adaptation algorithms.

- random_state: int

Defaults to zero.

- train_size: float

The ratio to use for train splits.
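To make the interaction between `train_size` and `random_state` concrete, here is a minimal sketch of a seeded, ratio-based index split. It is an assumption about the general technique, not synthcity's actual splitting code; `split_indices` is a hypothetical helper.

```python
import random
from typing import List, Tuple


def split_indices(
    n_samples: int, train_size: float = 0.8, random_state: int = 0
) -> Tuple[List[int], List[int]]:
    """Shuffle row indices with a fixed seed and cut them at the train_size ratio."""
    if not 0.0 < train_size < 1.0:
        raise ValueError("train_size must be strictly between 0 and 1")
    indices = list(range(n_samples))
    # A dedicated Random instance keeps the split reproducible for a given seed.
    random.Random(random_state).shuffle(indices)
    cut = int(n_samples * train_size)
    return indices[:cut], indices[cut:]


train_idx, test_idx = split_indices(10, train_size=0.8, random_state=0)
```

Calling the helper twice with the same `random_state` yields the same partition, which is what makes downstream benchmark results comparable across runs.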

Example

>>> TODO

- property columns: list

- compress() Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- compression_protected_features() list

- dataframe() pandas.core.frame.DataFrame

- decode(encoders: Dict[str, Any]) synthcity.plugins.core.dataloader.DataLoader

- decompress(context: Dict) synthcity.plugins.core.dataloader.DataLoader

- decorate(data: Any) synthcity.plugins.core.dataloader.DataLoader

- domain() Optional[str]

- drop(columns: list = []) synthcity.plugins.core.dataloader.DataLoader

- encode(encoders: Optional[Dict[str, Any]] = None) Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- fillna(value: Any) synthcity.plugins.core.dataloader.DataLoader

- static from_info(data: pandas.core.frame.DataFrame, info: dict) synthcity.plugins.core.dataloader.DataLoader

- get_fairness_column() Union[str, Any]

- hash() str

- info() dict

- is_tabular() bool

- match(constraints: synthcity.plugins.core.constraints.Constraints) synthcity.plugins.core.dataloader.DataLoader

- numpy() numpy.ndarray

- raw() Any

- sample(count: int, random_state: int = 0) synthcity.plugins.core.dataloader.DataLoader

- satisfies(constraints: synthcity.plugins.core.constraints.Constraints) bool

- property shape: tuple

- type() str

- unpack(as_numpy: bool = False, pad: bool = False) Any

- property values: numpy.ndarray

- class TimeSeriesDataLoader(temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame] = None, static_data: Optional[pandas.core.frame.DataFrame] = None, sensitive_features: List[str] = [], important_features: List[str] = [], fairness_column: Optional[str] = None, random_state: int = 0, train_size: float = 0.8, seq_offset: int = 0, **kwargs: Any)

Bases:

synthcity.plugins.core.dataloader.DataLoader

Data Loader for Time Series Data

- Constructor Args:

- temporal_data: List[pd.DataFrame]

The temporal data. A list of pandas DataFrames.

- observation_times: List

List of arrays mapping directly to index of each dataframe in temporal_data

- outcome: Optional[pd.DataFrame] = None

pandas DataFrame that can be anything (e.g. labels, regression outcome)

- static_data: Optional[pd.DataFrame] = None

pandas DataFrame mapping directly to index of each dataframe in temporal_data

- sensitive_features: List[str]

Name of sensitive features

- important_features: List[str]

Default: None. Only relevant for SurvivalGAN method

- fairness_column: Optional[str]

Optional fairness column label, used for fairness benchmarking.

- random_state: int

Defaults to zero.
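Because each subject in `temporal_data` can have a different number of observations, sequence models usually need the data padded to a common length together with a mask. The following is a minimal pure-Python sketch of that idea using nested lists instead of DataFrames. It is not the library's `pad_and_mask` implementation; `pad_sequences` is a hypothetical helper, and it assumes every sequence is non-empty and has the same number of features.

```python
from typing import List, Tuple

Sequence = List[List[float]]  # one row of feature values per observation


def pad_sequences(
    temporal_data: List[Sequence],
    observation_times: List[List[float]],
    fill: float = 0.0,
) -> Tuple[List[Sequence], List[List[float]], List[List[int]]]:
    """Pad every sequence to the longest one and return a 1/0 observation mask."""
    if len(temporal_data) != len(observation_times):
        raise ValueError("temporal_data and observation_times must have equal length")
    max_len = max(len(times) for times in observation_times)
    n_features = len(temporal_data[0][0])  # assumes non-empty, homogeneous sequences

    padded_data, padded_times, mask = [], [], []
    for rows, times in zip(temporal_data, observation_times):
        if len(rows) != len(times):
            raise ValueError("each sequence needs one observation time per row")
        missing = max_len - len(rows)
        padded_data.append(rows + [[fill] * n_features for _ in range(missing)])
        padded_times.append(list(times) + [fill] * missing)
        mask.append([1] * len(rows) + [0] * missing)  # 1 = observed, 0 = padding
    return padded_data, padded_times, mask


temporal_data = [[[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]], [[7.0, 8.0]]]
observation_times = [[0.0, 1.0, 2.0], [0.0]]
padded, times, mask = pad_sequences(temporal_data, observation_times)
```

Here the second subject has a single observation, so two padding rows are appended and its mask reads `[1, 0, 0]`.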

Example

>>> TODO

- property columns: list

- compress() Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- compression_protected_features() list

- dataframe() pandas.core.frame.DataFrame

- decode(encoders: Dict[str, Any]) synthcity.plugins.core.dataloader.DataLoader

- decompress(context: Dict) synthcity.plugins.core.dataloader.DataLoader

- decorate(data: Any) synthcity.plugins.core.dataloader.DataLoader

- domain() Optional[str]

- drop(columns: list = []) synthcity.plugins.core.dataloader.DataLoader

- encode(encoders: Optional[Dict[str, Any]] = None) Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- static extract_masked_features(full_temporal_features: list) tuple

- fillna(value: Any) synthcity.plugins.core.dataloader.DataLoader

- filter_ids(ids_list: list) pandas.core.frame.DataFrame

- static from_info(data: pandas.core.frame.DataFrame, info: dict) synthcity.plugins.core.dataloader.DataLoader

- get_fairness_column() Union[str, Any]

- hash() str

- ids() list

- info() dict

- is_tabular() bool

- static mask_temporal_data(temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, fill: Any = 0) Any

- match(constraints: synthcity.plugins.core.constraints.Constraints) synthcity.plugins.core.dataloader.DataLoader

- numpy() numpy.ndarray

- static pack_raw_data(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame], fill: Any = nan, seq_offset: int = 0) pandas.core.frame.DataFrame

- static pad_and_mask(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame], only_features: Any = False, fill: Any = 0) Any

- static pad_raw_data(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame]) Any

- static pad_raw_features(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame]) Any

- raw() Any

- property raw_columns: list

- sample(count: int, random_state: int = 0) synthcity.plugins.core.dataloader.DataLoader

- satisfies(constraints: synthcity.plugins.core.constraints.Constraints) bool

- static sequential_view(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame], id_col: str = 'seq_id', time_id_col: str = 'seq_time_id', seq_offset: int = 0) Tuple[pandas.core.frame.DataFrame, dict]

- property shape: tuple

- type() str

- static unique_temporal_features(temporal_data: List[pandas.core.frame.DataFrame]) List

- static unmask_temporal_data(temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, fill: Any = nan) Any

- unpack(as_numpy: bool = False, pad: bool = False) Any

- unpack_and_decorate(data: pandas.core.frame.DataFrame) synthcity.plugins.core.dataloader.DataLoader

- static unpack_raw_data(data: pandas.core.frame.DataFrame, info: dict) Tuple[Optional[pandas.core.frame.DataFrame], List[pandas.core.frame.DataFrame], List, Optional[pandas.core.frame.DataFrame]]

- property values: numpy.ndarray

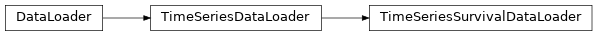

- class TimeSeriesSurvivalDataLoader(temporal_data: List[pandas.core.frame.DataFrame], observation_times: Union[List, numpy.ndarray, pandas.core.series.Series], T: Union[pandas.core.series.Series, numpy.ndarray], E: Union[pandas.core.series.Series, numpy.ndarray], static_data: Optional[pandas.core.frame.DataFrame] = None, sensitive_features: List[str] = [], important_features: List[str] = [], time_horizons: list = [], fairness_column: Optional[str] = None, random_state: int = 0, train_size: float = 0.8, seq_offset: int = 0, **kwargs: Any)

Bases:

synthcity.plugins.core.dataloader.TimeSeriesDataLoader

Data loader for Time series survival data

- Constructor Args:

- temporal_data: List[pd.DataFrame]

The temporal data. A list of pandas DataFrames.

- observation_times: List

List of arrays mapping directly to index of each dataframe in temporal_data

- T: Union[pd.Series, np.ndarray]

Time-to-event data

- E: Union[pd.Series, np.ndarray]

Censoring/event indicator data

- static_data: Optional[pd.DataFrame] = None

pandas DataFrame of static features for each subject

- sensitive_features: List[str]

Name of sensitive features

- important_features: List[str]

Default: None. Only relevant for SurvivalGAN method.

- fairness_column: Optional[str]

Optional fairness column label, used for fairness benchmarking.

- random_state: int

Defaults to zero.
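The relationship between `T`, `E` and `time_horizons` can be illustrated with a small pure-Python sketch. It assumes the common convention that `E == 1` marks an observed event and `E == 0` marks censoring, which the source does not state explicitly; `summarize_at_horizon` is a hypothetical helper, not a synthcity function.

```python
from typing import Dict, List


def summarize_at_horizon(T: List[float], E: List[int], horizon: float) -> Dict[str, int]:
    """Count subjects by status at a given time horizon.

    Assumes E == 1 means the event was observed at time T and
    E == 0 means the subject was censored at time T.
    """
    if len(T) != len(E):
        raise ValueError("T and E must have the same length")
    summary = {"event": 0, "censored": 0, "at_risk": 0}
    for t, e in zip(T, E):
        if t > horizon:
            summary["at_risk"] += 1   # still under observation at the horizon
        elif e == 1:
            summary["event"] += 1     # event observed on or before the horizon
        else:
            summary["censored"] += 1  # lost to follow-up on or before the horizon
    return summary


T = [2.0, 5.0, 3.0, 8.0]
E = [1, 0, 0, 1]
summary = summarize_at_horizon(T, E, horizon=4.0)
```

For a horizon of 4.0, one subject has had the event, one was censored earlier, and two are still at risk.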

Example

>>> TODO

- property columns: list

- compress() Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- compression_protected_features() list

- dataframe() pandas.core.frame.DataFrame

- decode(encoders: Dict[str, Any]) synthcity.plugins.core.dataloader.DataLoader

- decompress(context: Dict) synthcity.plugins.core.dataloader.DataLoader

- decorate(data: Any) synthcity.plugins.core.dataloader.DataLoader

- domain() Optional[str]

- drop(columns: list = []) synthcity.plugins.core.dataloader.DataLoader

- encode(encoders: Optional[Dict[str, Any]] = None) Tuple[synthcity.plugins.core.dataloader.DataLoader, Dict]

- static extract_masked_features(full_temporal_features: list) tuple

- fillna(value: Any) synthcity.plugins.core.dataloader.DataLoader

- filter_ids(ids_list: list) pandas.core.frame.DataFrame

- static from_info(data: pandas.core.frame.DataFrame, info: dict) synthcity.plugins.core.dataloader.DataLoader

- get_fairness_column() Union[str, Any]

- hash() str

- ids() list

- info() dict

- is_tabular() bool

- static mask_temporal_data(temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, fill: Any = 0) Any

- match(constraints: synthcity.plugins.core.constraints.Constraints) synthcity.plugins.core.dataloader.DataLoader

- numpy() numpy.ndarray

- static pack_raw_data(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame], fill: Any = nan, seq_offset: int = 0) pandas.core.frame.DataFrame

- static pad_and_mask(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame], only_features: Any = False, fill: Any = 0) Any

- static pad_raw_data(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame]) Any

- static pad_raw_features(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame]) Any

- raw() Any

- property raw_columns: list

- sample(count: int, random_state: int = 0) synthcity.plugins.core.dataloader.DataLoader

- satisfies(constraints: synthcity.plugins.core.constraints.Constraints) bool

- static sequential_view(static_data: Optional[pandas.core.frame.DataFrame], temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, outcome: Optional[pandas.core.frame.DataFrame], id_col: str = 'seq_id', time_id_col: str = 'seq_time_id', seq_offset: int = 0) Tuple[pandas.core.frame.DataFrame, dict]

- property shape: tuple

- type() str

- static unique_temporal_features(temporal_data: List[pandas.core.frame.DataFrame]) List

- static unmask_temporal_data(temporal_data: List[pandas.core.frame.DataFrame], observation_times: List, fill: Any = nan) Any

- unpack(as_numpy: bool = False, pad: bool = False) Any

- unpack_and_decorate(data: pandas.core.frame.DataFrame) synthcity.plugins.core.dataloader.DataLoader

- static unpack_raw_data(data: pandas.core.frame.DataFrame, info: dict) Tuple[Optional[pandas.core.frame.DataFrame], List[pandas.core.frame.DataFrame], List, Optional[pandas.core.frame.DataFrame]]

- property values: numpy.ndarray

- create_from_info(data: Union[pandas.core.frame.DataFrame, torch.utils.data.dataset.Dataset], info: dict) synthcity.plugins.core.dataloader.DataLoader

Helper for creating a DataLoader from existing information.
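One plausible way such a helper can work is to dispatch on a type tag stored in the information dictionary. The sketch below shows only that dispatch pattern. The `"data_type"` key, the builder functions and `create_from_info_sketch` are all assumptions made for illustration and do not reproduce synthcity's code.

```python
from typing import Any, Callable, Dict, Tuple


# Hypothetical stand-ins for the per-class from_info() static methods.
def build_generic(data: Any, info: dict) -> Tuple[str, Any, Any]:
    return ("generic", data, info.get("target_column"))


def build_time_series(data: Any, info: dict) -> Tuple[str, Any, Any]:
    return ("time_series", data, info.get("seq_offset", 0))


# Maps an assumed "data_type" tag to the matching builder.
BUILDERS: Dict[str, Callable[[Any, dict], Any]] = {
    "generic": build_generic,
    "time_series": build_time_series,
}


def create_from_info_sketch(data: Any, info: dict) -> Any:
    """Pick a builder based on info['data_type'] and delegate to it."""
    data_type = info.get("data_type")
    if data_type not in BUILDERS:
        raise ValueError(f"unsupported data_type: {data_type!r}")
    return BUILDERS[data_type](data, info)


result = create_from_info_sketch([[1, 2]], {"data_type": "generic", "target_column": "y"})

try:
    create_from_info_sketch([[1, 2]], {"data_type": "unknown"})
    rejected = False
except ValueError:
    rejected = True
```

A registry like this keeps the helper short, and supporting a new loader type only requires adding one entry to the mapping.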